
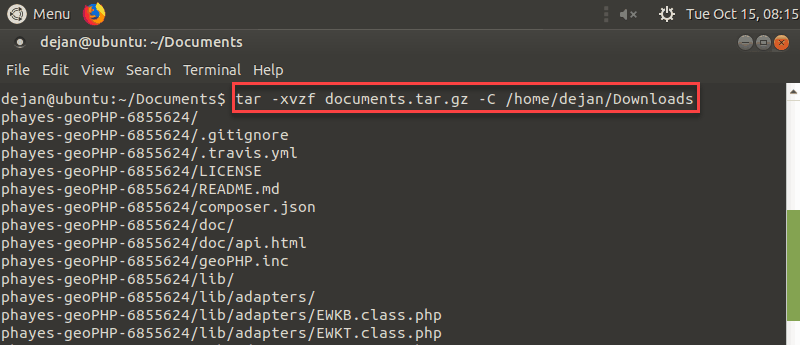

TAR.XZ may not be as common as some of the other compression archives out there, but it's an excellent way to reduce the size of some of your files so you can store them away for later use. Just like any other information on your computer, TAR.XZ files are prone to accidental deletion or data loss, which can leave you scrambling to retrieve your important information. In this guide, you'll learn a little more about what a TAR.XZ file is, and we'll provide you with the best methods to recover files of this format in case they're ever missing or compromised for whatever reason.

What Is TAR.XZ?

A TAR.XZ file is a compressed archive that uses lossless data compression, much like ZIP, 7z, or RAR files. It's used to compact your files and folders so they take up less space on your hard drive. This makes it easier to share them over your network, upload them to a website, or store them on a storage medium for safekeeping.

The name reflects how these files are made. The "TAR" part comes from the `tar` command on Linux and UNIX operating systems, which bundles files and folders into a single archive. The "XZ" part comes from a compression utility developed in 2005 that was meant to be an upgrade over the more common ZIP format, and it compresses that archive as a single stream. Despite this file type being relatively rare nowadays, its TAR half has actually been around since the early days of UNIX, long before XZ existed.

What if you don't even use dd, just `wget`...?

I'm pretty sure that a simple `>` would write it a little at a time. For example, when flashing directly from the internet, I found that some USB devices occasionally did a flush or something, and that caused a periodic slowdown. Without a buffer, the whole pipe would immediately slow down at once and would rarely ever reach full speed. With two consecutive buffers, the pipe could keep downloading and extracting for a while in the background. Even if the device didn't flush, without a buffer the whole pipe is sensitive to slowdowns.

Additionally, in my script I go a couple of steps further by loading the first 100MB of the img into cache first, and it also uses the buffer package to overcome I/O bottlenecks for maximum efficiency. It usually cut download time by around 3 seconds (and when you want to have the world's fastest img downloader, every second counts).
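The point about two consecutive buffers can be illustrated with a small Python simulation. This is a minimal sketch, not the script described above: the stage functions `producer`, `transformer`, and `consumer` and the `run_pipeline` helper are hypothetical stand-ins for the download, extract, and write steps, and two bounded `queue.Queue` objects play the role of the buffers between them. Upper-casing the bytes is a placeholder for real decompression.

```python
import queue
import threading

SENTINEL = None  # marks the end of the stream


def producer(chunks, out_q):
    """Stand-in for the download stage: push raw chunks into the first buffer."""
    for chunk in chunks:
        out_q.put(chunk)  # blocks only when the first buffer is full
    out_q.put(SENTINEL)


def transformer(in_q, out_q):
    """Stand-in for the extract stage: read, transform, push into the second buffer."""
    while True:
        chunk = in_q.get()
        if chunk is SENTINEL:
            out_q.put(SENTINEL)
            return
        out_q.put(chunk.upper())  # placeholder for decompression


def consumer(in_q, sink):
    """Stand-in for the write stage: drain the second buffer into the sink."""
    while True:
        chunk = in_q.get()
        if chunk is SENTINEL:
            return
        sink.append(chunk)


def run_pipeline(chunks, buffer_chunks=64):
    """Run the three stages concurrently, connected by two bounded buffers."""
    # A short stall in one stage is absorbed until its buffer fills,
    # instead of immediately stalling every stage in the pipe.
    q1 = queue.Queue(maxsize=buffer_chunks)
    q2 = queue.Queue(maxsize=buffer_chunks)
    sink = []
    threads = [
        threading.Thread(target=producer, args=(chunks, q1)),
        threading.Thread(target=transformer, args=(q1, q2)),
        threading.Thread(target=consumer, args=(q2, sink)),
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return b"".join(sink)


if __name__ == "__main__":
    data = [b"chunk%d;" % i for i in range(5)]
    print(run_pipeline(data))  # b'CHUNK0;CHUNK1;CHUNK2;CHUNK3;CHUNK4;'
```

With a large `buffer_chunks`, the earlier stages can keep working while the write stage briefly stalls; with `buffer_chunks=1`, a stall propagates back through the pipe almost immediately.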

In my tests, I found it gives a bit of a buffer. The cp command (which is very fast in my tests) uses a block size of just 128KB (see `strace cp`).
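Copying in fixed-size blocks, as reported for `cp` above, looks roughly like this in Python. The 128 KiB figure is taken from the post (what `strace` showed on that system) and may differ between `cp` versions and filesystems; `block_copy` is a hypothetical helper, not part of any tool.

```python
import io

BLOCK_SIZE = 128 * 1024  # 128 KiB, the block size the post reports for cp


def block_copy(src, dst, block_size=BLOCK_SIZE):
    """Copy a binary stream in fixed-size blocks and return the bytes copied."""
    total = 0
    while True:
        block = src.read(block_size)  # may return fewer bytes than requested
        if not block:  # empty bytes means end of stream
            break
        dst.write(block)  # buffered file objects write the whole block
        total += len(block)
    return total


if __name__ == "__main__":
    src = io.BytesIO(b"\x00" * (300 * 1024))
    dst = io.BytesIO()
    print(block_copy(src, dst))  # 307200
```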

So, given that the disk read times are near zero for this case only, what's the best read/write block size to give dd for the optimal overlap between reads and writes? I gave up trying to calculate everything and produce an optimal C program! Now I just use `cp`: it's simple to use and fast enough in practice (slightly faster than `dd bs=1m`). Just for my interest, why are you using `bs=10M` for the `dd` command?
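One way to explore the block-size question empirically is to time the same copy at a few block sizes. This is a rough sketch under stated assumptions: `time_copy` and `compare_block_sizes` are hypothetical helpers, the source is a temporary file that will usually sit in the page cache (close to the "read times near zero" case), and `os.fsync` is called so flush time is included. As the thread itself points out, a toy benchmark like this ignores download, decompression, and verification effects.

```python
import os
import tempfile
import time


def time_copy(src_path, dst_path, block_size):
    """Copy src_path to dst_path with block_size reads/writes; return seconds taken."""
    start = time.perf_counter()
    with open(src_path, "rb", buffering=0) as src, open(dst_path, "wb", buffering=0) as dst:
        while True:
            block = src.read(block_size)
            if not block:
                break
            view = memoryview(block)
            while view:  # unbuffered writes may be partial, so loop until done
                written = dst.write(view)
                view = view[written:]
        os.fsync(dst.fileno())  # include flush-to-disk time in the measurement
    return time.perf_counter() - start


def compare_block_sizes(total_bytes, block_sizes):
    """Time copies of one random temp file at several block sizes."""
    results = {}
    with tempfile.TemporaryDirectory() as tmp:
        src_path = os.path.join(tmp, "src.img")
        with open(src_path, "wb") as f:
            f.write(os.urandom(total_bytes))
        for bs in block_sizes:
            dst_path = os.path.join(tmp, f"dst_{bs}.img")
            results[bs] = time_copy(src_path, dst_path, bs)
            # Sanity check: every copy must be byte-identical to the source.
            with open(src_path, "rb") as a, open(dst_path, "rb") as b:
                if a.read() != b.read():
                    raise RuntimeError(f"copy mismatch at bs={bs}")
    return results


if __name__ == "__main__":
    sizes = [128 * 1024, 1024 * 1024, 10 * 1024 * 1024]  # ~cp, dd bs=1M, dd bs=10M
    for bs, secs in compare_block_sizes(32 * 1024 * 1024, sizes).items():
        print(f"bs={bs // 1024} KiB: {secs:.3f} s")
```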

Believe it or not, with enough optimization, it can be faster to download an img, extract it, and flash it to an SD card than to flash a local, pre-extracted img. It's incredibly complicated, given all the variables: different read/download speeds, decompression speeds, indeterminate overlap between reads and writes, disk write speeds, variable asynchronous disk block prefetch ranges, "sync" time, verification time, and so on. Given all of these, any simple benchmark results will be meaningless. For example, I usually download the image to a memory disk, then uncompress it and copy it to the raw device.
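The "memory disk, uncompress, copy to the raw device" workflow can be sketched with Python's `lzma` module, assuming the image is xz-compressed (the post doesn't name the format). `flash_xz_image` is a hypothetical helper; in real use `target_path` would be a raw device such as `/dev/sdX` (a placeholder), which requires root and overwrites the whole device, so the usage example writes to a temporary file instead.

```python
import lzma
import os
import tempfile


def flash_xz_image(xz_path, target_path, block_size=4 * 1024 * 1024):
    """Stream-decompress xz_path into target_path in blocks; return bytes written.

    In practice target_path would be a raw device (placeholder: /dev/sdX);
    any writable file works for testing.
    """
    written = 0
    with lzma.open(xz_path, "rb") as src, open(target_path, "wb") as dst:
        while True:
            block = src.read(block_size)  # decompressed bytes, never the whole image
            if not block:
                break
            dst.write(block)
            written += len(block)
        dst.flush()
        os.fsync(dst.fileno())  # the "sync" step that counts toward total time
    return written


if __name__ == "__main__":
    # A temporary directory stands in for the memory disk (not necessarily RAM-backed).
    with tempfile.TemporaryDirectory() as tmp:
        img = os.urandom(1024 * 1024)
        xz_path = os.path.join(tmp, "image.img.xz")
        with lzma.open(xz_path, "wb") as f:
            f.write(img)
        out_path = os.path.join(tmp, "device.img")
        print(flash_xz_image(xz_path, out_path))  # 1048576
```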
